Since my last post, I have been busy dockerizing the entire project and integrating all the sensors into ROS. Each sensor is now running in an isolated Docker container and publishing sensor data. As I am not an expert in Docker, I took a Udemy course to familiarize myself with it. Now, everything has been dockerized and organized using docker-compose.

Camera

The camera used is the IMX477 sensor with a resolution of 12.3 MP, capable of capturing 4K photos and videos. I successfully integrated it with ROS2, and it now publishes images on the “/images” topic. Although I had difficulties getting RViz to work remotely via SSH, I managed to get rqt_image_view (a ROS program for viewing camera streams) to function properly. So now I’m able to see the camera stream live from another computer while I have an SSH tunnel open.

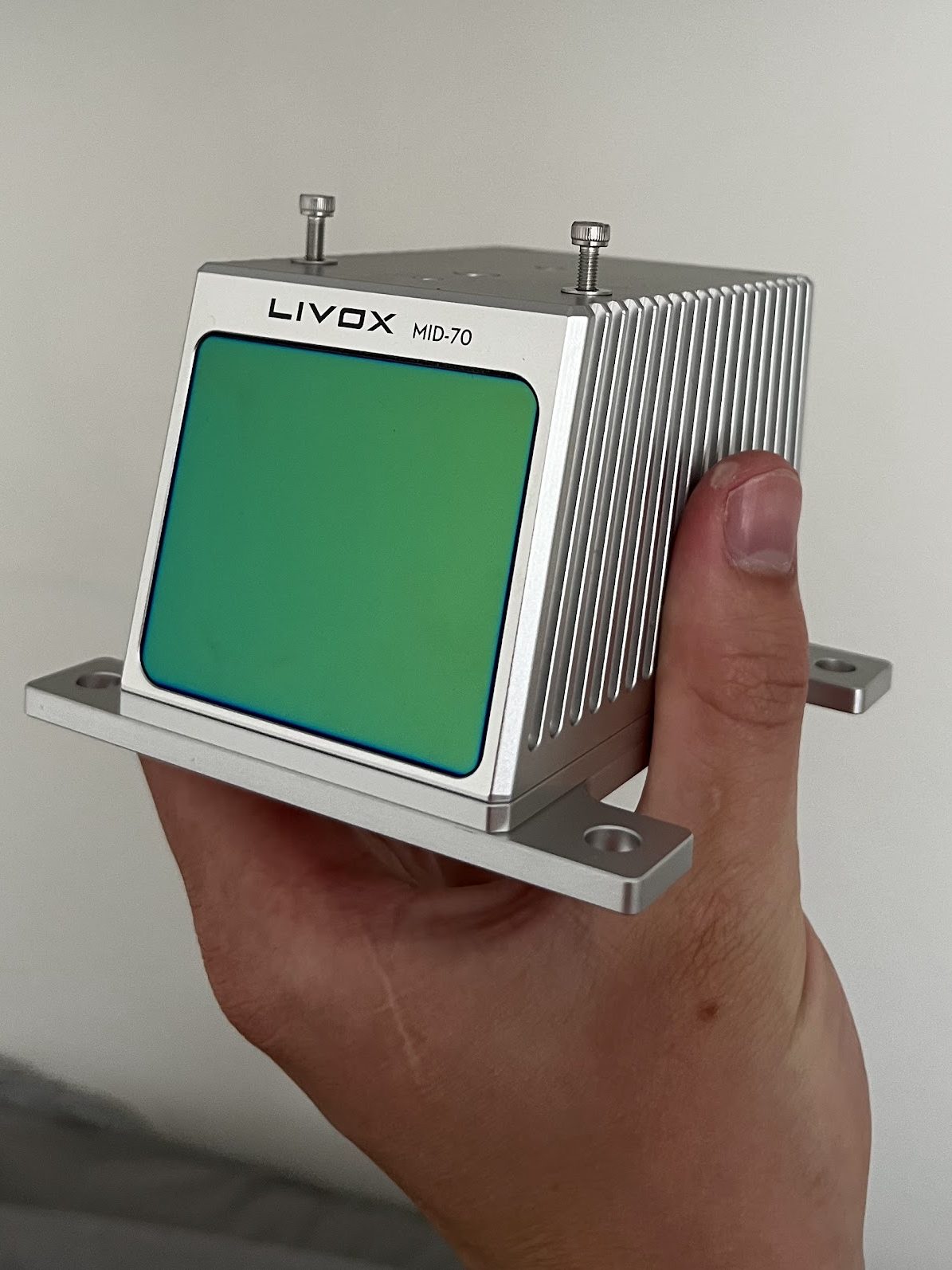

Lidar

The lidar used in the project is the Livox Mid-70 sensor. It is capable of emitting 200,000 laser points per second within a 70-degree field of view (FOV). The accompanying video on the left is captured from my window, just like the camera video mentioned earlier. The lidar uses Ethernet for data transfer. It is connected to a Docker container running ROS1 Noetic, which publishes the lidar data as point clouds. The reason for using ROS1 instead of ROS2 is that mapping and localization will be done with LIO-SAM, which is specifically designed for ROS1. In the future, however, I plan to use a ROS1-to-ROS2 bridge to access the point clouds in ROS2 and develop a node that generates global and local costmaps for the Nav2 stack. Below is a demonstration of the scan pattern the lidar uses to ensure complete coverage while emitting laser points.

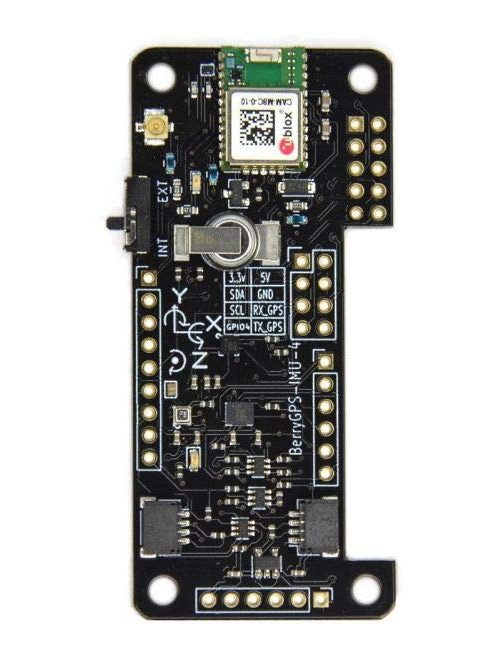
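The costmap node mentioned above does not exist yet, so the following is only a minimal sketch of the core idea, assuming the point cloud has already been converted to plain (x, y, z) tuples in the robot frame. The function name `points_to_grid`, the grid size, the resolution, and the height band are all hypothetical choices for illustration. The value 100 for an occupied cell follows the nav_msgs/OccupancyGrid convention.

```python
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres, robot frame (assumed)


def points_to_grid(
    points: Iterable[Point],
    size_m: float = 10.0,      # side length of the square grid (assumed)
    resolution: float = 0.5,   # cell size in metres (assumed)
    z_min: float = 0.1,        # ignore points below this height, e.g. ground
    z_max: float = 2.0,        # ignore points above this height, e.g. branches
) -> List[List[int]]:
    """Project 3D points onto a 2D grid centred on the robot.

    Cells containing at least one point inside the height band are marked
    100 (occupied); all other cells stay 0 (free).
    """
    cells = int(round(size_m / resolution))
    grid = [[0] * cells for _ in range(cells)]
    half = size_m / 2.0

    for x, y, z in points:
        if not (z_min <= z <= z_max):
            continue  # outside the obstacle height band
        # Floor division keeps negative offsets out of cell 0.
        col = int((x + half) // resolution)
        row = int((y + half) // resolution)
        if 0 <= row < cells and 0 <= col < cells:
            grid[row][col] = 100

    return grid


if __name__ == "__main__":
    cloud = [(1.0, 0.0, 0.5), (1.0, 0.0, 0.0), (100.0, 0.0, 0.5)]
    grid = points_to_grid(cloud)
    occupied = sum(v == 100 for row in grid for v in row)
    print(f"{occupied} occupied cell(s) in a {len(grid)}x{len(grid)} grid")
```

A real node would also need to handle the sensor-to-robot transform, inflation around obstacles, and clearing of stale cells, none of which is shown here.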

IMU

The IMU utilized is a BerryGPS-IMU V2 salvaged from a previous drone project. It is an inexpensive sensor with limited specs. Unfortunately, it tends to produce a significant amount of noisy data. To address this issue, I plan to implement a Kalman filter and hope that it improves the quality of the data enough for mapping and localization purposes. The IMU is a 9-axis sensor, incorporating a 3-axis gyroscope, 3-axis accelerometer, and a 3-axis compass all integrated on the same chip. It will be directly mounted on top of the lidar.
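To give an idea of what the Kalman filter could look like, here is a minimal sketch for a single axis. It assumes the true value changes slowly (a constant-state model), which is a simplification. The class name `ScalarKalman`, the noise variances `q` and `r`, and the simulated readings are all made up for illustration and are not tuned for the BerryGPS-IMU.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ScalarKalman:
    """One-dimensional Kalman filter with a constant-state model."""

    q: float        # process noise variance (assumed, needs tuning)
    r: float        # measurement noise variance (assumed, needs tuning)
    x: float = 0.0  # current state estimate
    p: float = 1.0  # variance of the current estimate

    def update(self, z: float) -> float:
        # Predict: the state is assumed unchanged, uncertainty grows by q.
        self.p += self.q
        # Correct: blend prediction and measurement using the Kalman gain.
        k = self.p / (self.p + self.r)
        self.x += k * (z - self.x)
        self.p *= 1.0 - k
        return self.x


def simulate(
    true_rate: float = 0.5, sigma: float = 0.2, n: int = 500, seed: int = 0
) -> Tuple[List[float], List[float]]:
    """Filter simulated noisy readings of a constant value (not real IMU data)."""
    rng = random.Random(seed)
    kf = ScalarKalman(q=1e-5, r=sigma ** 2)
    raw = [true_rate + rng.gauss(0.0, sigma) for _ in range(n)]
    filtered = [kf.update(z) for z in raw]
    return raw, filtered


if __name__ == "__main__":
    raw, filtered = simulate()
    print(f"last raw reading: {raw[-1]:.3f}, last filtered value: {filtered[-1]:.3f}")
```

A larger `q` makes the filter follow changes faster but lets more noise through, while a larger `r` does the opposite. For mapping and localization the real filter would likely need to fuse all nine axes rather than smooth each one separately.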

Updates

Recently, I placed an order for two new worm gear motors, each with a power output of 200 watts. The plan is to connect each motor to the rear wheel axle using chains and sprockets, and then connect the rear and front axles with additional chains and sprockets. The primary reason for acquiring these new motors was to address a design flaw identified in last year’s model: the robot would begin rolling when placed on a slight incline. The RV30 worm gearbox should resolve this, since it ensures that the output shaft can only be turned by the motor and not by the motion of the wheels.

In addition, I acquired a Jetson Nano with 4GB of RAM to replace my old Raspberry Pi 3B. This upgrade significantly enhances the computing power of the robot. Now, building Docker containers takes only a fraction of the previous time, and I can effortlessly run multiple containers simultaneously for sensor reading without encountering any issues.

Thoughts

The plan is to keep the robot in Borås, where I have access to forests and terrain, while I’m living in Linköping. My initial idea was to install a long-range WiFi antenna in Borås, where the robot will be located. However, the prices for point-to-point WiFi repeaters are quite high, and most of them have a maximum range of 500 meters. Currently, I believe the best solution is to acquire a used 4G router with a SIM card and try to use the same subscription as my mobile plan, where I have unlimited data.

Next up

I intend to work on the physical robot, which includes tasks like preparing and painting metal sheets for the sides. Furthermore, I plan to design and construct a new mount for the lidar and camera, initiate the assembly of chains and sprockets, and begin the implementation of mapping and localization.

2 responses to “Dockerize everything!”

Is there a post/page for more background on this project?

Like goals, limitations, budget, GitHub(?) etc.

Interested in following this journey!

Currently, this is the only page for the project; maybe in the future I will create an information page about it. The goal is for the robot to be able to navigate a forest road all on its own: I just select a point on a map, and it goes to that point and back while mapping the world along the way. I haven’t given the budget too much thought. I have already bought most of the stuff and hope to be able to finish the first goal with the current gear. Also, the GitHub is private, and I think I will keep it that way for now.